In this blog post, I evaluate two misinformation education tools: RumorGuard and Bad News.

First, let’s talk about RumorGuard. It is a tool that teaches users to identify misinformation by analyzing real-world examples against five factors: source, evidence, context, authenticity, and reasoning. Bad News, on the other hand, is an interactive game in which you play as a fake news creator and gain followers based on your posts, learning along the way how misinformation spreads through techniques like emotional manipulation, impersonation, and polarization.

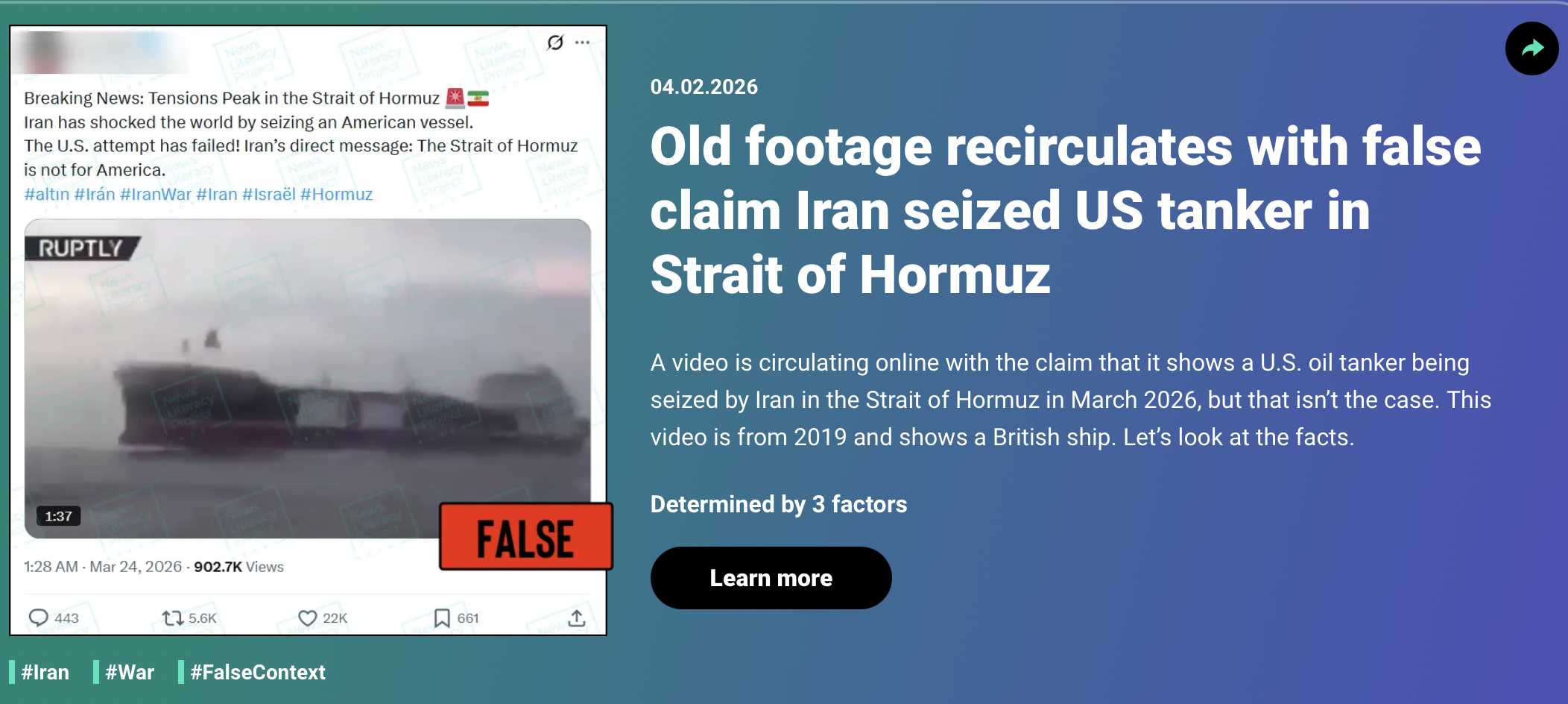

I think RumorGuard is a very helpful tool for learning how to spot misinformation and which factors to consider before deciding whether something is real or fake. For example, in one of its cases, about a U.S. tanker in the Strait of Hormuz, the platform evaluates the credibility of the claim against its five key factors: source, evidence, context, authenticity, and reasoning. In that example, the first three factors were enough to identify the claim as false. Overall, RumorGuard is a strong resource because it lets users interact with real-world examples instead of just reading about misinformation in theory. This makes it easier to understand how misinformation actually appears online and how to evaluate the accuracy of content in a practical way. The accompanying image shows how RumorGuard helps identify misinformation using a real-world example.

Image source: RumorGuard

In my evaluation, RumorGuard is a very useful tool for learning how to identify misinformation, although its interface can feel slightly confusing at first. The platform is organized into tabs covering different kinds of content, such as conspiratorial thinking, false context, and AI, and it takes some attention to learn how to navigate them. Once I got familiar with it, though, it became more engaging and easier to use. I found the real-world examples especially helpful, as they made it easier to understand how misinformation appears and how to evaluate it.

The most useful part of the tool was learning the five key factors used to assess information, which provide a clear and structured way to analyze content. I also think it would be very beneficial for someone with no prior knowledge of misinformation, since it uses real examples instead of just theory. Compared to the Bad News game, I think RumorGuard is more effective because it focuses on actual cases rather than simulations. At the same time, it feels more like a fact-checking approach, where users analyze information after it has been presented. This matches findings reported in Scientific American, which show that fact-checking can reduce misinformation but does not completely eliminate it. In my opinion, while RumorGuard is helpful, it is not enough on its own, and people still need real-world exposure to misinformation to fully develop their ability to recognize it.

I chose Bad News as my tool. It starts with an introductory message that says, “You're here for the position of disinformation and fake news tycoon, is that correct?” The game makes it clear that this is a simulation and not real-world posting. It then asks you to post a tweet by choosing from a few options, and based on your choices, you begin to gain followers and influence.

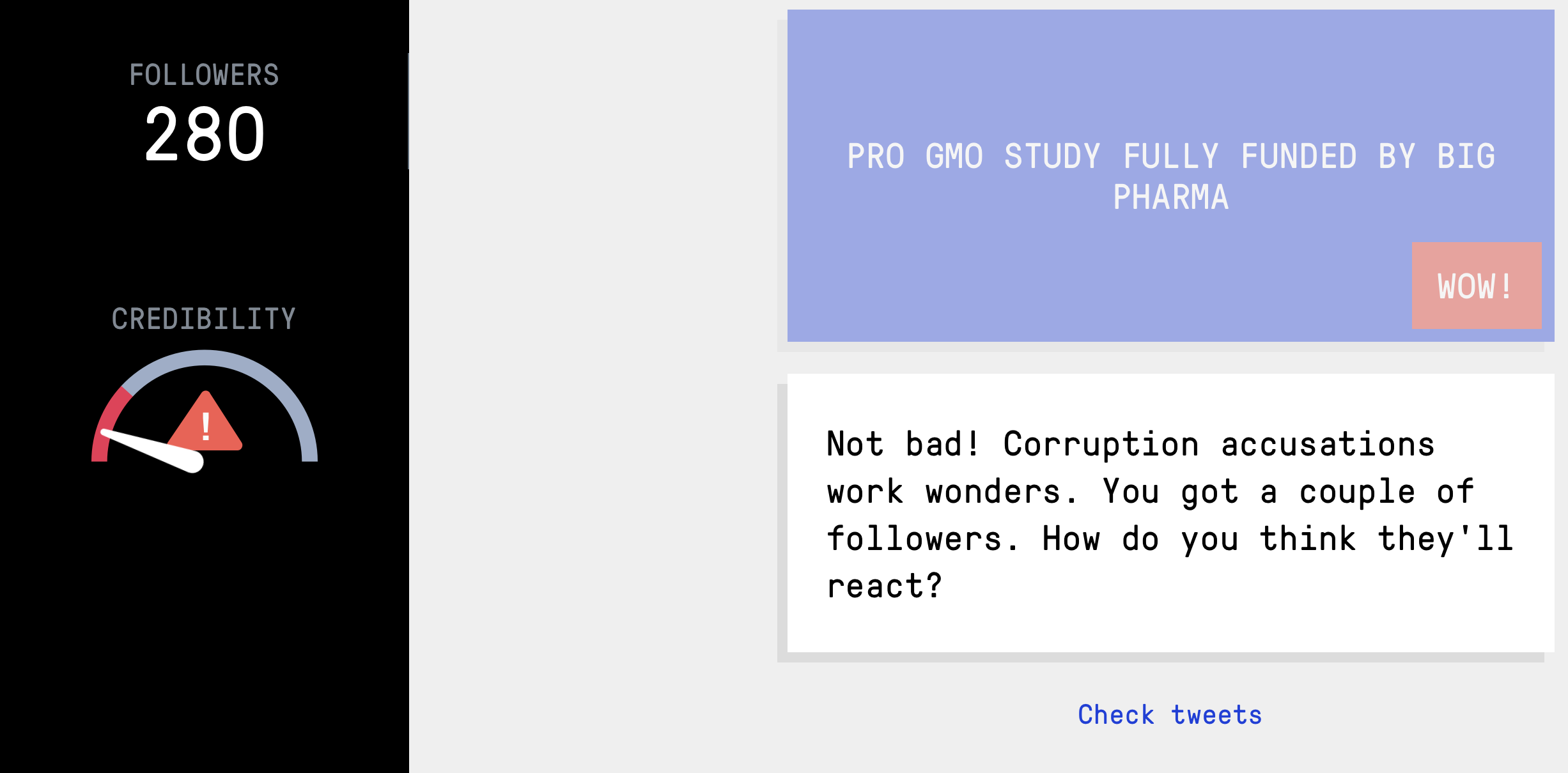

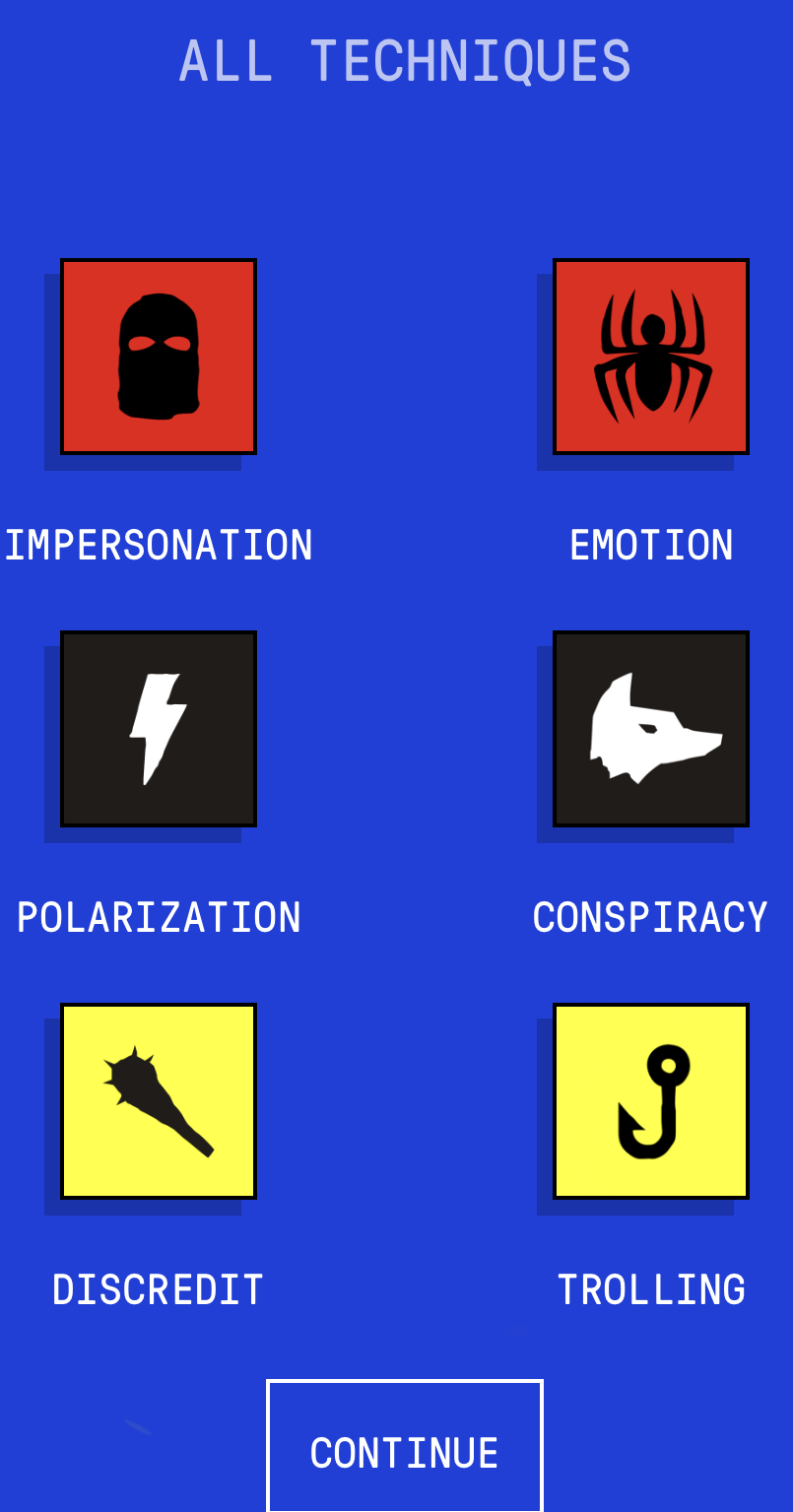

The game has six stages, each focused on a specific misinformation technique: impersonation, emotion, polarization, conspiracy, discrediting, and trolling, as shown in the image to the right. In each stage, you are given multiple options for posts, and your choices affect how your followers react. A meter on the side tracks your follower count and credibility, showing how misinformation can spread depending on the type of content you post. In one example from my gameplay, I impersonated NASA under the slightly altered name “NÄSA” and posted a false alert about a meteorite. Some users believed the post and reacted with panic, which increased my reach. Overall, the tool teaches how misinformation spreads through emotional content, fake credibility, and audience reactions. Below are two images from the game. The image on the left shows the meter used to track followers, and the image on the right shows how the game lets the player choose between different tweet options.

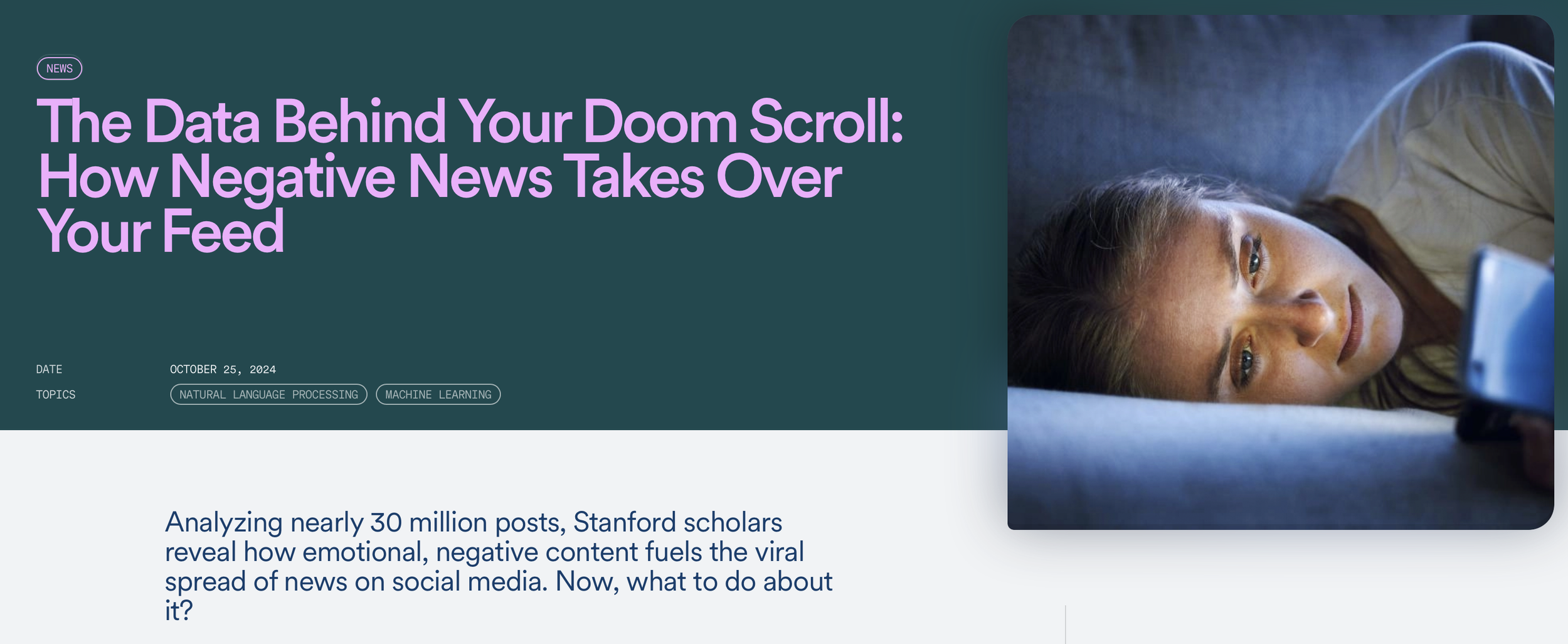

After completing all six stages of the game, I think Bad News does a very good job of showing how misinformation spreads on social media. The game clearly demonstrates how emotionally charged content tends to get more reactions and engagement, which increases follower count, while less engaging content does not perform as well. This connects with research I found from the Stanford Institute for Human-Centered Artificial Intelligence, which shows that negative and emotional content is more likely to go viral. The study found that posts designed to trigger emotions like fear, anger, or anxiety tend to get more engagement and spread more widely. This helps explain why misinformation often spreads quickly, since it is frequently framed in an emotional and attention-grabbing way. The game also introduces the role of fact-checkers, whose responses to misleading posts can reduce follower count and limit the spread of misinformation. This aligns with findings reported in Scientific American, which show that fact-checking helps reduce misinformation, even though it has limitations.

Image source: Bad News

Image source: Stanford University

Image source: Bad News

Additionally, an article from The Washington Post explains that misinformation spreads very quickly, especially when it involves conspiracy theories. The article also mentions that people often react emotionally and share content without verifying it. This connects with the conspiracy stage of Bad News, which shows how exaggerated or misleading narratives can help gain more attention and followers.

Research from the World Economic Forum also shows that the Bad News game helps people get better at recognizing fake news and manipulation techniques by exposing them to these strategies in advance. However, the game does have some limitations: it mostly uses short text-based posts and does not include visuals like images or videos, which are common in real-world misinformation. In my opinion, tools like this are helpful for learning about misinformation, but they are not enough on their own, and people still need real-world exposure to fully understand how misinformation spreads.

Image source: World Economic Forum

Image source: Bad News

Image shows six stages of the game

Blog Post 2: Evaluating misinformation education tools